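kubeadm is a tool from the official Kubernetes community for quickly deploying a Kubernetes cluster.

With it, a Kubernetes cluster can be deployed with two commands:

# Create a Master node
kubeadm init

# Join a Node to the current cluster
kubeadm join <Master node IP and port>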

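1. Installation requirements

Before starting, the machines for the Kubernetes cluster must meet the following conditions:

- One or more machines running CentOS 7.x (x86_64)
- Hardware: at least 2 GB of RAM, 2 CPUs, and 30 GB of disk
- Internet access for pulling images; if a server has no internet access, download the images in advance and import them onto the node
- Swap disabled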
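The requirements above can be checked before installing anything. The following is a minimal sketch, not part of kubeadm: the helper names `meets_min`, `mem_total_mib`, and `swap_total_kib` are hypothetical, and the 1700 MiB threshold in the usage comment is an assumption chosen because a "2 GB" machine usually reports slightly less than 2048 MiB.

```shell
# meets_min LABEL ACTUAL MINIMUM: report whether a measured value is large enough.
meets_min() {
  local label=$1 actual=$2 minimum=$3
  if [ "$actual" -ge "$minimum" ]; then
    echo "OK   $label: $actual (minimum $minimum)"
  else
    echo "FAIL $label: $actual (minimum $minimum)"
    return 1
  fi
}

# Read total memory (MiB) and total swap (KiB) from a meminfo-style file.
mem_total_mib()  { awk '/^MemTotal:/ {print int($2 / 1024)}' "${1:-/proc/meminfo}"; }
swap_total_kib() { awk '/^SwapTotal:/ {print $2}' "${1:-/proc/meminfo}"; }

# On a real node one might run:
#   meets_min "RAM (MiB)" "$(mem_total_mib)" 1700
#   meets_min "CPUs" "$(nproc)" 2
#   [ "$(swap_total_kib)" -eq 0 ] && echo "OK   swap is off"
```

A non-zero SwapTotal means swap is still configured and must be turned off before kubeadm init.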

2. Prepare the environment

| Role | IP |
|---|---|
| master | 10.0.0.200 |
| node1 | 10.0.0.201 |
| node2 | 10.0.0.202 |

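Run the following on all nodes unless a step says otherwise.

# Disable the firewall
systemctl stop firewalld
systemctl disable firewalld

# Disable SELinux
sed -i 's/^SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config # permanent, takes effect after a reboot
setenforce 0 # temporary, until the next reboot

# Disable swap
swapoff -a # temporary
sed -ri 's/.*swap.*/#&/' /etc/fstab # permanent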
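The sed expression for /etc/fstab comments out every line that contains the word swap, including lines that are already commented. As a sketch of a narrower variant, the hypothetical function `comment_out_swap` below touches only active entries and runs against a temporary sample file, so nothing on the host is changed. The sample fstab content is made up for illustration.

```shell
# Work on a throwaway sample so the real /etc/fstab is not modified.
fstab_sample=$(mktemp)
cat > "$fstab_sample" << 'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
# /swapfile none swap sw 0 0
EOF

# comment_out_swap FILE: print FILE with active swap entries commented out.
# Lines that already start with "#" are skipped.
comment_out_swap() {
  sed -E '/^[[:space:]]*#/! s/^([^#].*[[:space:]]swap[[:space:]].*)$/#\1/' "$1"
}

comment_out_swap "$fstab_sample"
```

Printing to standard output first makes it possible to review the result before writing it back to the real file.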

# Set the hostname on each machine according to the plan
# master
hostnamectl set-hostname master
# node1
hostnamectl set-hostname node1
# node2
hostnamectl set-hostname node2

# Add hosts entries on the master
cat >> /etc/hosts << EOF
10.0.0.200 master
10.0.0.201 node1
10.0.0.202 node2
EOF
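Because the hosts entries are appended with >>, running that step twice leaves duplicate lines in /etc/hosts. The sketch below shows one way to make the step repeatable. The function `add_host_entry` is a hypothetical helper, and the demonstration writes to a temporary file rather than /etc/hosts.

```shell
# add_host_entry FILE IP NAME: append "IP NAME" unless NAME is already mapped.
add_host_entry() {
  local file=$1 ip=$2 name=$3
  if grep -Eq "[[:space:]]${name}([[:space:]]|\$)" "$file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Demonstration on a temporary file instead of /etc/hosts.
hosts_demo=$(mktemp)
add_host_entry "$hosts_demo" 10.0.0.200 master
add_host_entry "$hosts_demo" 10.0.0.201 node1
add_host_entry "$hosts_demo" 10.0.0.202 node2
add_host_entry "$hosts_demo" 10.0.0.200 master   # repeated call adds nothing
cat "$hosts_demo"
```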

# Pass bridged IPv4 traffic to the iptables chains
cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
sysctl --system # apply
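The net.bridge.* keys only exist once the br_netfilter kernel module is loaded, so sysctl --system can silently skip them on a fresh machine. The sketch below checks a sysctl file for the expected settings and lists, in comments, the commands one might run as root. The function name `sysctl_file_enables` is hypothetical.

```shell
# sysctl_file_enables FILE KEY: succeed if FILE sets KEY to 1.
sysctl_file_enables() {
  awk -F= -v key="$2" '
    { gsub(/[[:space:]]/, "", $1); gsub(/[[:space:]]/, "", $2) }
    $1 == key && $2 == "1" { found = 1 }
    END { exit (found ? 0 : 1) }
  ' "$1"
}

# On a real node (as root) one might load the module, re-apply the settings,
# and read the value back:
#   modprobe br_netfilter
#   sysctl --system
#   sysctl net.bridge.bridge-nf-call-iptables
#   sysctl_file_enables /etc/sysctl.d/k8s.conf net.bridge.bridge-nf-call-iptables
```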

# Synchronize the time
yum install ntpdate -y
ntpdate time.windows.com

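3. Install Docker, kubeadm, and kubelet on all nodes

In the Kubernetes version used here (v1.20.x), the default container runtime is Docker, so install Docker first.

3.1 Install Docker

yum install -y wget
wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo
yum -y install docker-ce
systemctl enable docker && systemctl start docker

# Configure a registry mirror, then restart Docker so that it takes effect
cat > /etc/docker/daemon.json << EOF
{
  "registry-mirrors": ["https://b9pmyelo.mirror.aliyuncs.com"]
}
EOF
systemctl restart docker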

3.2 Add the Aliyun YUM repository

cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

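3.3 Install kubeadm, kubelet, and kubectl

New versions are released frequently, so pin the version to the one that will be passed to kubeadm init in the next step (v1.20.4):

yum install -y kubelet-1.20.4 kubeadm-1.20.4 kubectl-1.20.4

# Without a version number, yum installs the latest available release:
# yum install -y kubelet kubeadm kubectl

systemctl enable kubelet && systemctl start kubelet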
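A mismatch between the installed packages and the version given to kubeadm init is a common cause of errors. As a small sketch, the hypothetical helpers `minor_of` and `same_minor` below compare two version strings at the MAJOR.MINOR level. The commands in the comments are one way to obtain the installed versions.

```shell
# minor_of VERSION: print "MAJOR.MINOR" for inputs such as "v1.20.4" or "1.20.4-0".
minor_of() {
  local v=${1#v}
  echo "$v" | cut -d. -f1,2
}

# same_minor A B: succeed when both versions share MAJOR.MINOR.
same_minor() {
  [ "$(minor_of "$1")" = "$(minor_of "$2")" ]
}

# On a real node the inputs could come from, for example:
#   kubeadm version -o short     # prints a value such as v1.20.4
#   kubelet --version            # prints a value such as Kubernetes v1.20.4
if same_minor v1.20.4 1.20.4-0; then
  echo "kubeadm target and installed package agree"
fi
```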

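4. Deploy the Kubernetes Master

Run on the Master.

kubeadm init \
  --apiserver-advertise-address=10.0.0.200 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.20.4 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16

# --apiserver-advertise-address: the IP address on which the API server advertises that it is listening
# --image-repository: the registry from which control-plane images are pulled; the default, k8s.gcr.io, is not reachable from mainland China, so the Aliyun registry is used here
# --kubernetes-version: may be omitted if no version was pinned when installing kubelet; the value must be compatible with the installed kubeadm and kubelet, otherwise kubeadm init reports an error
# --service-cidr: the IP address range for Service virtual IPs
# --pod-network-cidr: the IP address range available to the pod network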
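The service and pod ranges should not contain the addresses of the nodes themselves. The sketch below checks the master address from the plan against both ranges with plain shell arithmetic. The helpers `ip_to_int` and `ip_in_cidr` are hypothetical names, and only IPv4 is handled.

```shell
# ip_to_int A.B.C.D: print the address as a 32-bit integer.
ip_to_int() {
  local IFS=.
  set -- $1
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# ip_in_cidr IP NET/PREFIX: succeed when IP lies inside the network.
ip_in_cidr() {
  local ip net prefix mask
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  prefix=${2#*/}
  mask=$(( (4294967295 << (32 - prefix)) & 4294967295 ))
  [ $(( ip & mask )) -eq $(( net & mask )) ]
}

for cidr in 10.96.0.0/12 10.244.0.0/16; do
  if ip_in_cidr 10.0.0.200 "$cidr"; then
    echo "conflict: 10.0.0.200 is inside $cidr"
  else
    echo "ok: 10.0.0.200 is outside $cidr"
  fi
done
```

If a node address falls inside either range, choose different values for --service-cidr or --pod-network-cidr before running kubeadm init.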

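If kubeadm init reports that coredns:v1.8.0 cannot be found, append --ignore-preflight-errors=all to the kubeadm init command to skip the error, and continue with the steps below once kubeadm init finishes. This flag suppresses every preflight check, so read the remaining warnings before proceeding.

Configure kubectl:

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config
kubectl get nodes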

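5. Join the Kubernetes Nodes

Run on each Node.

To add a node to the cluster, run the kubeadm join command printed at the end of kubeadm init. The token and hash below are examples; use the values from your own output:

kubeadm join 10.0.0.200:6443 --token esce21.q6hetwm8si29qxwn \
  --discovery-token-ca-cert-hash sha256:00603a05805807501d7181c3d60b478788408cfe6cedefedb1f97569708be9c5

The default token is valid for 24 hours and cannot be used after it expires. To create a new token and print the matching join command, run on the Master:

kubeadm token create --print-join-command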
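A join command that was copied with a truncated token or hash fails in ways that are hard to read. The sketch below validates the two values before they are used. The function names are hypothetical; the patterns follow the usual shape of kubeadm bootstrap tokens (six characters, a dot, sixteen characters) and of a SHA-256 hash.

```shell
# valid_bootstrap_token TOKEN: tokens look like "abcdef.0123456789abcdef".
valid_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# valid_ca_cert_hash HASH: expects "sha256:" followed by 64 hexadecimal digits.
valid_ca_cert_hash() {
  printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

token="esce21.q6hetwm8si29qxwn"
hash="sha256:00603a05805807501d7181c3d60b478788408cfe6cedefedb1f97569708be9c5"
if valid_bootstrap_token "$token" && valid_ca_cert_hash "$hash"; then
  echo "token and hash are well formed"
fi
```

On the Master, kubeadm token list shows the existing tokens and their remaining lifetime.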

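6. Deploy the CNI network plugin

On the Master, create kube-flannel.yml with the following content. The Network value in net-conf.json must match the --pod-network-cidr passed to kubeadm init (10.244.0.0/16).

vi kube-flannel.yml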

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
    - configMap
    - secret
    - emptyDir
    - hostPath
  allowedHostPaths:
    - pathPrefix: "/etc/cni/net.d"
    - pathPrefix: "/etc/kube-flannel"
    - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
    - min: 0
      max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
rules:
  - apiGroups: ['extensions']
    resources: ['podsecuritypolicies']
    verbs: ['use']
    resourceNames: ['psp.flannel.unprivileged']
  - apiGroups:
      - ""
    resources:
      - pods
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - nodes
    verbs:
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - nodes/status
    verbs:
      - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
  - kind: ServiceAccount
    name: flannel
    namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "cniVersion": "0.2.0",
      "name": "cbr0",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-amd64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - amd64
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: lizhenliang/flannel:v0.11.0-amd64
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: lizhenliang/flannel:v0.11.0-amd64
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg

kubectl apply -f kube-flannel.yml

kubectl get pods -n kube-system

NAME                             READY   STATUS    RESTARTS   AGE
coredns-7f89b7bc75-5bk98         1/1     Running   0          22m
coredns-7f89b7bc75-cz8vq         1/1     Running   0          22m
etcd-master                      1/1     Running   0          22m
kube-apiserver-master            1/1     Running   0          22m
kube-controller-manager-master   1/1     Running   0          22m
kube-flannel-ds-amd64-2cpdk      1/1     Running   0          7m3s
kube-flannel-ds-amd64-4xntj      1/1     Running   0          7m3s
kube-flannel-ds-amd64-khqfw      1/1     Running   0          7m3s
kube-proxy-7rc7h                 1/1     Running   0          22m
kube-proxy-fncg8                 1/1     Running   0          16m
kube-proxy-rd6kl                 1/1     Running   0          16m
kube-scheduler-master            1/1     Running   0          22m

kubectl get pod -n kube-system -o wide

If kubectl get pods -n kube-system shows a pod in ImagePullBackOff or ErrImagePull, for example coredns-545d6fc579-4jw9w, look up the full name of the image that could not be pulled:

kubectl get pods coredns-545d6fc579-4jw9w -n kube-system -o yaml | grep image:

Suppose the image is registry.aliyuncs.com/google_containers/coredns/coredns:v1.8.0. Sometimes the image exists but under a different name, so pull it manually on the node where the pod is scheduled, then retag it with the name the pod expects:

docker pull coredns/coredns:1.8.0
sudo docker tag coredns/coredns:1.8.0 registry.aliyuncs.com/google_containers/coredns/coredns:v1.8.0
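Scanning the pod list by eye gets tedious on larger clusters. As a rough sketch, the hypothetical filter `not_ready_pods` below reads kubectl get pods output and prints only the pods that need attention. The sample input is illustrative, not captured from a real cluster.

```shell
# not_ready_pods: read `kubectl get pods` output on stdin and print
# "NAME STATUS" for every pod that is neither Running nor Completed.
not_ready_pods() {
  awk 'NR > 1 && $3 != "Running" && $3 != "Completed" { print $1, $3 }'
}

# Sample input; on a real cluster one might run:
#   kubectl get pods -n kube-system | not_ready_pods
not_ready_pods << 'EOF'
NAME                       READY   STATUS             RESTARTS   AGE
coredns-545d6fc579-4jw9w   0/1     ImagePullBackOff   0          5m
etcd-master                1/1     Running            0          22m
EOF
```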

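7. Test the Kubernetes cluster

Create a pod in the cluster and verify that it runs correctly:

kubectl create deployment nginx --image=nginx
kubectl expose deployment nginx --port=80 --type=NodePort
kubectl get pod,svc

Access the service at http://NodeIP:Port, where NodeIP is the IP of any node and Port is the NodePort shown in the PORT(S) column of kubectl get svc.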
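The NodePort is assigned by the cluster, so it has to be read from the service before the URL can be built. The sketch below extracts it from kubectl get svc output. The function `node_port_of` is a hypothetical helper, and the port 30080 in the sample is made up.

```shell
# node_port_of NAME: read `kubectl get svc` output on stdin and print the
# NodePort of service NAME, taken from a PORT(S) value such as "80:30080/TCP".
node_port_of() {
  awk -v svc="$1" '$1 == svc || $1 == "service/" svc {
    split($5, parts, "[:/]")
    print parts[2]
  }'
}

# Sample input; on a real cluster one might run:
#   port=$(kubectl get svc | node_port_of nginx)
#   curl "http://10.0.0.201:${port}"
node_port_of nginx << 'EOF'
NAME         TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)        AGE
kubernetes   ClusterIP   10.96.0.1      <none>        443/TCP        30m
nginx        NodePort    10.101.20.30   <none>        80:30080/TCP   1m
EOF
```

kubectl can also return the value directly with kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'.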

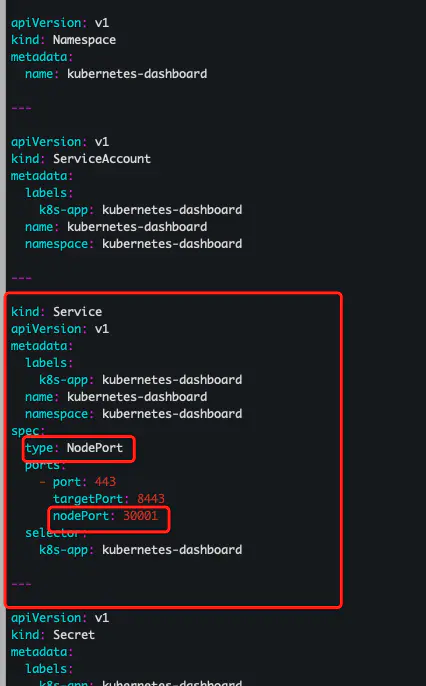

8. 安装dashboard

-

下载yaml

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.4/aio/deploy/recommended.yaml

关于raw.githubusercontent.com failed: Connection -

修改yaml

-

执行安装

kubectl apply -f recommended.yaml -

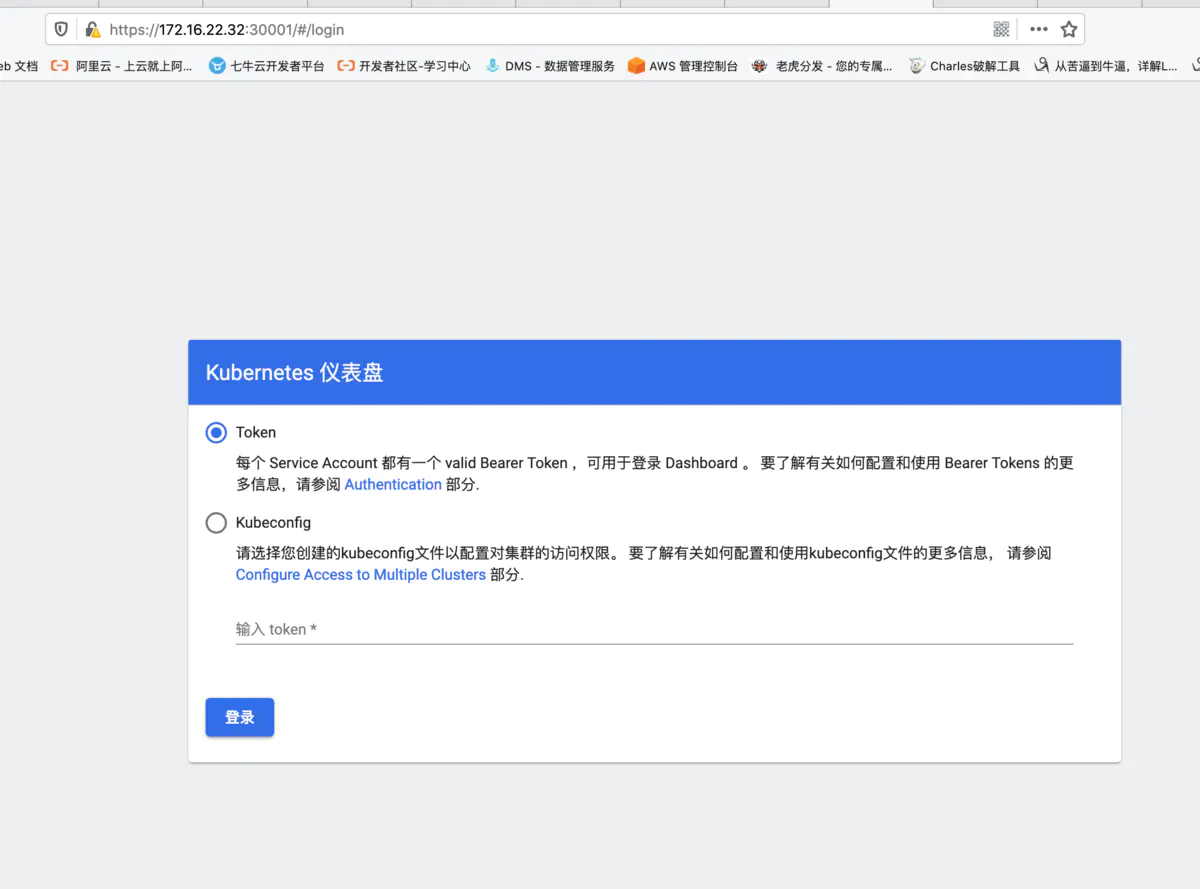

创建 serviceaccount

kubectl create serviceaccount dashboard-admin -n kube-system -

创建clusterrolebinding为dashboard sa授权集群权限cluster-admin

kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin -

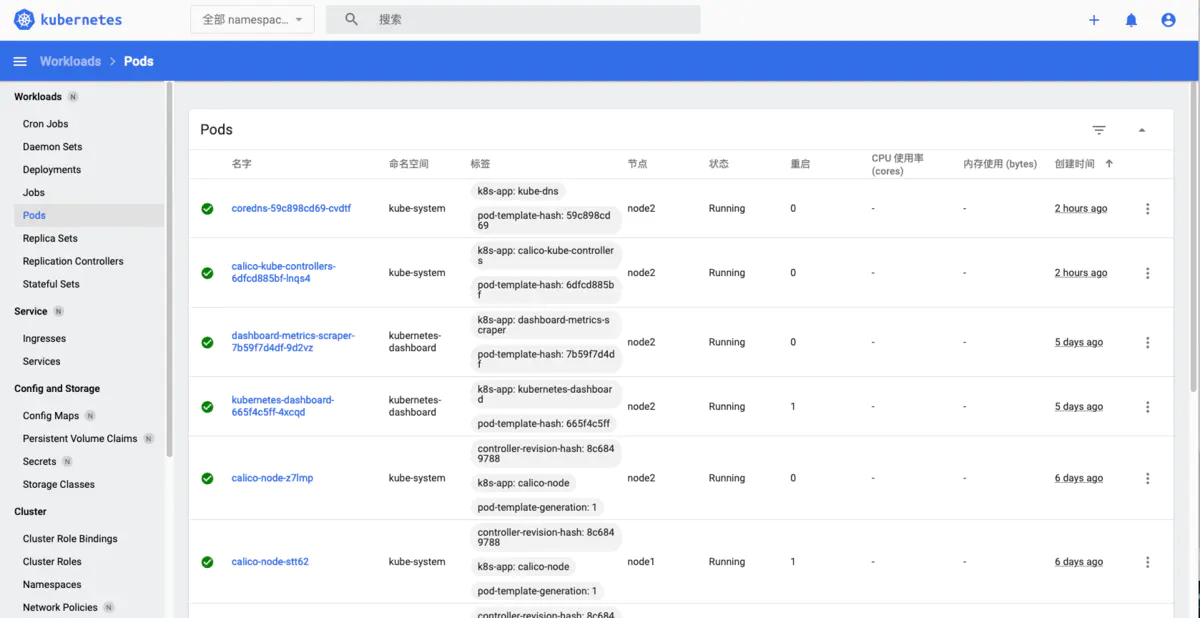

查看dashboard所在node 可以用

kubectl get pods -A -o wide,然后找到dashboard 所在的node节点.

使用token 登陆,获取token:kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')
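The describe output contains several fields besides the token, which makes copying the value error-prone. As a small sketch, the hypothetical filter `token_from_describe` below prints only the token value. The sample input uses a placeholder, not a real token.

```shell
# token_from_describe: read `kubectl describe secret` output on stdin
# and print only the value of the "token:" field.
token_from_describe() {
  awk '$1 == "token:" { print $2 }'
}

# Sample input with a placeholder value; on a real cluster one might pipe the
# kubectl describe secrets command from this section into token_from_describe.
token_from_describe << 'EOF'
Name:         dashboard-admin-token-abcde
Namespace:    kube-system
Type:  kubernetes.io/service-account-token

Data
====
ca.crt:     1066 bytes
namespace:  11 bytes
token:      PLACEHOLDER.TOKEN.VALUE
EOF
```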

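If the dashboard pod stays in the Pending state:

kubectl delete -f recommended.yaml
vi recommended.yaml

Comment out every occurrence of

nodeSelector:
  "kubernetes.io/os": linux

and apply the manifest again:

kubectl apply -f recommended.yaml

Bind administrator privileges to the anonymous user. This gives every unauthenticated request full control of the cluster, so use it only on an isolated test cluster:

kubectl create clusterrolebinding test:anonymous --clusterrole=cluster-admin --user=system:anonymous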